The real cost of your next question may be flowing somewhere unseen.

Every time you type a prompt and wait for an answer, something far less visible begins to move. Not just code, not just electricity, but infrastructure humming in places most of us will never visit. Behind the illusion of instant intelligence lies an industrial choreography of servers, cooling systems, and resource demands that stretch far beyond the screen in your hand. As artificial intelligence scales at breathtaking speed across the United States, a quieter tension is forming beneath it, one that has little to do with algorithms and everything to do with what keeps them alive.

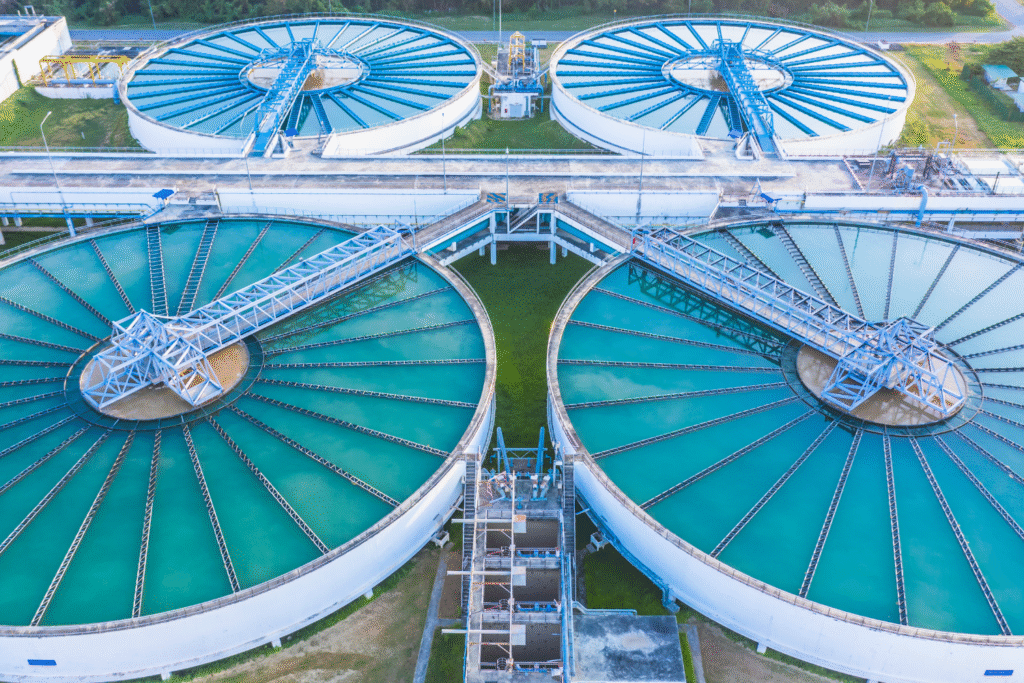

1. Data centres consume astonishing amounts of water for cooling.

Massive server farms dedicated to AI and cloud computing draw in water to cool equipment that runs around the clock. According to research published by the Environmental & Energy Study Institute, some data centres in the U.S. withdraw billions of gallons per year from reservoirs that serve entire communities. The water is often used in evaporative cooling systems, meaning much of what is withdrawn evaporates rather than being returned to its source. Once you know that one centre can guzzle what a small town uses, the scale of the issue stops feeling abstract.

2. AI-driven growth is putting fresh-water supplies at risk.

Many of these operations are sited in areas already stressed by drought or high water demand, according to analysts at Lawfare. In places like Virginia or Oregon, data-centre expansions have raised alarm among residents whose local water supply is thinning. Because the water is sometimes drawn directly from aquifers or river systems, the “hidden” cost of digital services becomes very real for people living in those watersheds. The story isn’t just about electrons; it’s about water rights and local resilience.

3. Each AI query may carry a measurable water footprint.

While per-query estimates vary widely, studies suggest that even small interactions with large language models consume measurable amounts of water. According to MIT’s Climate Project, when you combine direct cooling, electricity generation and hardware manufacturing, the cumulative water cost adds up rapidly. That means the innocent act of chatting with a bot isn’t entirely benign for water systems. Awareness doesn’t stop usage, but it changes how we think about what “free” digital services really cost.

4. Training AI models demands far more water than deploying them.

The massive computing runs needed to train advanced models generate tremendous heat, and the cooling infrastructure must cope accordingly. As a result, the upfront “manufacture” of AI systems (training large neural networks) can consume far more water than day-to-day use. The entire lifecycle, from hardware production to model tuning, embeds water costs we rarely see on our end-user screens. This means much of the water usage sits upstream, making transparency all the more critical.

5. Location of data centres determines local water impact.

Where a data centre is built matters enormously for water stress. In arid regions, or in places where little of the used cooling water is returned to its source, the burden is higher. Some facilities are choosing sites near plentiful water, but that often means diverting resources from other users. Other sites opt for closed-loop or air cooling, but those are exceptions. The decision of “where” becomes a decision of “who pays” in water terms, and for many local communities the bill is mounting.

6. Closed-loop cooling helps, but challenges remain.

Some operators are adopting technologies that recycle cooling water or switch to systems with minimal evaporation. These efforts reduce water draw but don’t eliminate the embedded water in electricity generation, hardware manufacture and heat rejection. While helpful, improvements in efficiency still don’t neutralise the scale of cumulative demand. In other words, making it “less bad” isn’t the same as making it “benign”, and we’re still far from the latter.

7. Indirect water use from energy production amplifies the burden.

Water isn’t only used in direct cooling. Many data centres depend on power generated by thermoelectric plants or hydroelectric facilities, both of which consume significant amounts of water (through plant cooling and reservoir evaporation, respectively). So the water cost of a chatbot isn’t only the water you see; it’s also the water connected to the electricity that powers the servers. The shadow water footprint extends deep into energy infrastructure and becomes harder to track or attribute.
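To make the arithmetic behind that shadow footprint concrete, here is a minimal Python sketch, not a method taken from any of the studies mentioned above. It assumes that on-site cooling water and off-site power-generation water can both be expressed in litres per kilowatt-hour of electricity used by the facility. The function name, the parameter names and the input numbers are all hypothetical placeholders chosen for easy arithmetic; they are not measurements of any real data centre.

```python
from dataclasses import dataclass


@dataclass
class WaterFootprint:
    """Water use split into what happens at the site and what happens upstream."""
    onsite_litres: float   # water consumed by the facility's own cooling
    offsite_litres: float  # water consumed generating the electricity it buys

    @property
    def total_litres(self) -> float:
        return self.onsite_litres + self.offsite_litres


def estimate_water(energy_kwh: float,
                   onsite_litres_per_kwh: float,
                   grid_litres_per_kwh: float) -> WaterFootprint:
    """Estimate direct and indirect water use for a given amount of electricity.

    All three inputs are assumptions supplied by the caller:
    - energy_kwh: electricity used by the facility
    - onsite_litres_per_kwh: cooling water consumed on site per kWh
    - grid_litres_per_kwh: water consumed by power plants per kWh delivered
    """
    if min(energy_kwh, onsite_litres_per_kwh, grid_litres_per_kwh) < 0:
        raise ValueError("inputs must be non-negative")
    return WaterFootprint(
        onsite_litres=energy_kwh * onsite_litres_per_kwh,
        offsite_litres=energy_kwh * grid_litres_per_kwh,
    )


# Placeholder inputs only, NOT real-world figures.
example = estimate_water(energy_kwh=1000.0,
                         onsite_litres_per_kwh=0.5,
                         grid_litres_per_kwh=2.0)
print(f"on-site:  {example.onsite_litres:.0f} L")
print(f"off-site: {example.offsite_litres:.0f} L")
print(f"total:    {example.total_litres:.0f} L")
```

With these made-up inputs, the off-site share is four times the on-site share. Real ratios depend on the local power mix and cooling design, which is exactly why site-level disclosure matters.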

8. Water usage disclosures by tech firms are still sparse.

Despite mounting concerns, many data-centre operators and tech firms do not publish detailed water-use data broken down by site or service. The lack of transparency makes it difficult for policymakers and the public to gauge whether water use is sustainable. With AI demand growing rapidly, this opacity may hamper efforts to ensure balance between digital growth and water stewardship. Without better data, the question of “How much is too much?” remains unanswered.

9. Strain on water-scarce communities is already happening.

In areas where water resources are tight, new data centres have triggered local backlash or regulatory scrutiny. In The Dalles, Oregon, for example, a single tech campus accounted for a quarter of the town’s water usage. When a community’s aquifer or river becomes the cooling loop for a global data centre, local rights and priorities come into tension. For some residents, the invisible cost of chatbots becomes very visible.

10. Sustainable AI might require rethinking both tech and water.

If we want chatbots and AI services to coexist with responsible resource use, we’ll need more than incremental efficiency. That means shifting models of deployment: choosing cooler climates, adopting non-evaporative cooling, prioritising renewable-powered centres, and possibly offsetting water use in critical watersheds. In other words, it means creating AI that doesn’t just work but respects ecosystems too. Because the next time you log in to ask a question, the answer will come with a silent question of its own: “What did it cost behind the screen?”